New Selfhoods: Reflections in the Black Mirror-Pond

Artificial intelligence feels like the new Big Bad Wolf of sociology. Or maybe even of the academy at large. There’s very little agreement among us about how to even begin to approach it, largely because its rapid ascension into the limelight has called into question many of the core frameworks that underlie our disciplines. In the spirit of trying to cling to the few things that we can still supposedly call our own, my contribution to the already very dense discourse around this topic is this: I’ve noticed that AI seems to rouse a lot of big, specific feelings in academics, namely enthusiasm, skepticism/fear, and grief. There are more, of course, but these stand out to me because they point to three very distinct, reflexive processes that we seem to be confronting.

1. Enthusiasm. There are many (many!) who have approached the advent of AI with relish. Enthusiasm plays a large role in keeping any kind of market bubble inflated, but my hunch is that the enthusiasm around AI is of a very different kind from the enthusiasm around other products or concepts that have dominated our industrial realities. Folks who talk about it do so with a kind of anticipatory joy rather than a joy that has already been experienced; in other words, the fervor around AI seems to lie almost exclusively in its possibilities rather than its realities (though this also just sounds, generally, like the ethos of Silicon Valley). The Sam Altmans of the world have made their visions, once limited to boardroom pitches to venture capitalists and late nights in back-alley startup offices, accessible to the common man. I’m not sure that I feel great about the democratization of this feeling. It honestly reminds me of whatever it is that consumes your stomach right before the first downhill of a roller coaster ride. But funders, whether they like thrill rides or not, appreciate this sort of thing, and we already see forms of this creeping into the world of social science research through rapidly shifting approaches to grants, papers, and research agendas at large. We also have nowhere near the level of infrastructure that the more obviously-for-profit world has to be able to properly contend with it, but perhaps that’s a problem (or a solution) for tomorrow.

2. Skepticism and Fear. Sociologists have always been wary of tackling technological development writ large; the field, since the dawn of the Golden Age of Online, has desperately been playing catch-up in trying to integrate these advances into social theory and ideologies of being. Technological logics and consequences fundamentally threaten a lot of the basic foundations of world-building that entire generations in this field have taken for granted. How can it be possible, for example, that “digital community” exists both within and outside a “physical community”? How is something both a space and not a space? How do you study a space-not-space that exists in a virtual vacuum with no physical bodies? While I do think this kind of skepticism is very warranted, I also think that letting it turn into intellectual reductionism will be the downfall of this field. There are individuals out there who are capable of contending with things like memetic evolution or the Manosphere or buy-nothing groups or rage chambers with the same brilliance that the “founders” of this discipline (e.g., Weber) brought to their own pressing realities. In fact, I think they’re already here, and that they’re already doing this work. I feel like I even know who some of them are. But this field has a dark way of suppressing brilliance, particularly when it emerges as a transdisciplinary or provocative take. And I don’t think that there are enough folks yet who are willing to lean into rather than away from this fear, particularly around the painful, strange world of AI, and to hold these things the way that they probably should be held.

3. Grief. I spend a lot of time here. While writing this post, I kept thinking about the story of how Narcissus withered away at a pond as he stared at his own reflection. I’ve always heard this as an allegory about the dangers of vanity, but after a cursory reread of the text, I don’t think that I support this interpretation anymore. A seer, Tiresias, actually warns Narcissus’s mother long before this incident happens. “As long as your son never truly recognizes who he is,” he says, “he will live a long life. The alternative is not so great.” Narcissism, it follows, isn’t just empty-headed dissociation: it is a dangerous and sad process of self-recognition.

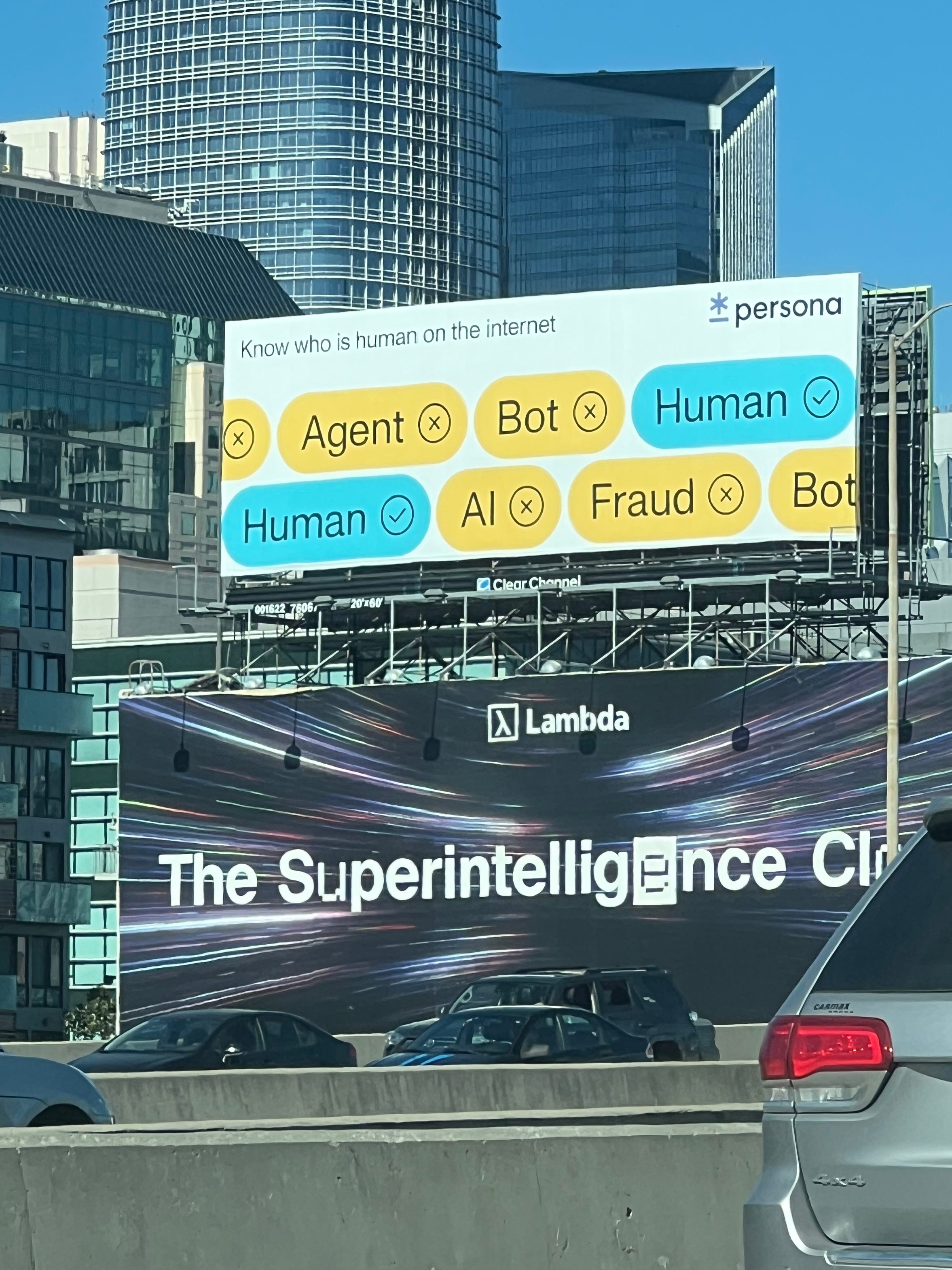

I feel like Narcissus a lot these days when I sit at my computer and grade LLM-generated essays that are objectively well-written, or when I ask ChatGPT how to unscrew the top of my vacuum cleaner so I can get in and clean the dust chamber for the fifteenth time, or when I hear about algorithmic replications of racial bias and its steady advancement into policing infrastructure. I’ve cut down on my own use of em-dashes to prove that I am me. I don’t know how to respond when my father sends me a picture he’s generated of me in space after reading the news about the Artemis launch: “Look, it’s you in space!” It becomes harder and harder each day to understand where the mouth ends and the body starts: the other day, I went to a meeting about addressing cases of academic integrity and plagiarism, and I felt like a zoologist being trained on endangered species identification. Prove that you are alive. Prove that your aliveness is sufficient. Sniff out flowery language, synecdoches, dependent-independent clause reliance in sentence construction, even if you learned, in a different time, that these things were you, and that your writing was you. The way you write — who you are — is no longer you. You are no longer yours.

No wonder he couldn’t look away.